I have just released v1.6.0 of the BRE Pipeline Framework to the CodePlex project page. The framework is now mature enough that its core has not changed much this time around, but there are still tons of new features and optimizations to be found in this version. For a recap on what the framework is all about you can read the primer or the provided documentation.

Before I dive into the new features, here are some steps to get started. If you’re installing the framework for the first time or upgrading from a version above v1.4.0 then all you need to do is download the installer, uninstall the previous version if applicable, run the new installer to completion, and then import the BREPipelineFramework.LatestVocabs.xml vocabulary definition file from the vocabulary subfolder in the program files folder (default location is C:\Program Files (x86)\BRE Pipeline Framework\Vocabularies). If you are upgrading from a version older than v1.4.0 then you might be forced to stop your BizTalk host instances since older versions of the pipeline component were not signed with a strong named key and installed in the GAC.

Onto the new features.

HTTP Header Manipulation

The BREPipelineFramework.SampleInstructions.ContextInstructions vocabulary already has vocabulary definitions that allow you to read inbound headers from the WCF.InboundHttpHeaders context property, or to set outbound headers through the WCF.HttpHeaders context property. However, reading or setting individual HTTP headers through these context properties is not exactly easy, because each context property actually contains a delimited list of all the inbound or outbound HTTP headers, as per the following example. Getting to an individual HTTP header can be tricky if you try to use these vocabulary definitions.

Important_User: True

Content-Length: 328

Content-Type: application/x-www-form-urlencoded

Expect: 100-continue

Host: servicebusrelaytest.servicebus.windows.net

Authorized_Token: 32d6f253-de53-4cf3-b559-1ec34ac1ad28

(All of the above is the value of a single, non-promoted context property in the http://schemas.microsoft.com/BizTalk/2006/01/Adapters/WCF-properties namespace.)

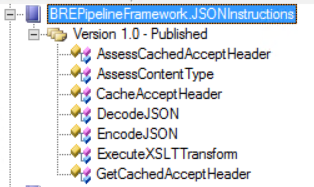

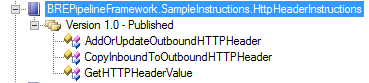

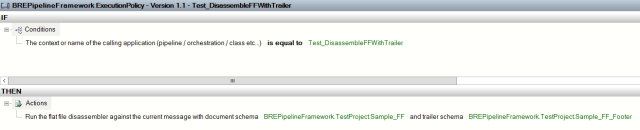

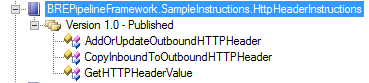

So now there is a new vocabulary called BREPipelineFramework.SampleInstructions.HttpHeaderInstructions which provides the below vocabulary definitions, giving you easier access to get or set inbound or outbound HTTP headers.

The following screenshot demonstrates how these vocabulary definitions can be used. As you’ll see, you can read in inbound HTTP headers, explicitly set outbound HTTP headers, or copy inbound HTTP headers to outbound HTTP headers. You can set as many outbound HTTP headers as you want, and the framework will roll them all up into the single context property for you.
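To make the idea of a single delimited context property more concrete, here is a minimal Python sketch of splitting such a value into individual headers and rolling a set of outbound headers back up into one string. It is not the framework's implementation: the function names are hypothetical, and it assumes the headers are separated by line breaks in a "Name: value" layout, as in the example earlier in this section.

```python
def parse_headers(raw):
    """Split a delimited header string into a name -> value dictionary."""
    headers = {}
    for line in raw.splitlines():
        # Split on the first colon only, so values may contain colons.
        name, sep, value = line.partition(":")
        if sep and name.strip():
            headers[name.strip()] = value.strip()
    return headers


def roll_up(headers):
    """Join individual headers back into a single delimited string."""
    # Assumption: CRLF is used as the delimiter between headers.
    return "\r\n".join(f"{name}: {value}" for name, value in headers.items())


raw = (
    "Important_User: True\r\n"
    "Content-Length: 328\r\n"
    "Host: servicebusrelaytest.servicebus.windows.net"
)
inbound = parse_headers(raw)

# Set one outbound header explicitly (hypothetical name and value),
# then copy one inbound header across.
outbound = {"X-Custom": "abc"}
outbound["Important_User"] = inbound["Important_User"]
rolled = roll_up(outbound)
```

The point of the sketch is only that working with one header at a time is much simpler once the delimited value has been split, which is the convenience the new vocabulary provides.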

Dynamic Pipeline Component Execution

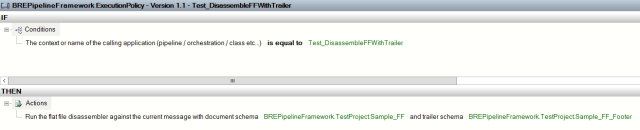

This version of the framework now allows you to dynamically execute some of the out of the box Microsoft pipeline components. This feature was provided as a direct result of an issue posted on the framework’s page that asked for the ability to have context properties promoted on the target message when performing dynamic transformation using the framework. This would only really be possible by executing the XML Disassembler pipeline component, so the framework now has the ability to execute this component and some others. You will be able to execute these pipeline components from within the new vocabulary BREPipelineFramework.SampleInstructions.PipelineInstructions.

As you’ve seen in the previous screenshot, you can now execute the XML Assembler, XML Disassembler, Flat File Assembler, Flat File Disassembler, and XML Validator pipeline components dynamically. This means that you can assess conditions that allow you to decide whether/which pipeline components you should execute, and moreover you can dynamically configure parameters for these pipeline components as well. The following screenshot demonstrates how you can execute the Flat File Disassembler while specifying the trailer schema parameter value.

One thing to note is that the BRE Pipeline Framework pipeline component is not a disassembler, so if you choose to execute a disassembler pipeline component and it ends up debatching an envelope message, the pipeline component will only pass through the first disassembled message. In the case of the XML Disassembler, if you don’t want the message to be debatched you can make use of the DisassembleXMLMessagePropertyPromotionOnly vocabulary definition, which will result in context properties being promoted against the message without any debatching taking place.
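The debatching caveat can be illustrated with a small Python sketch. This is purely conceptual and does not use any BizTalk API: `debatch` and `first_message_only` are hypothetical helpers, and the envelope is an invented example in which each child of the root element is treated as one message.

```python
import xml.etree.ElementTree as ET


def debatch(envelope_xml):
    """Yield each child of the envelope root as its own message string."""
    root = ET.fromstring(envelope_xml)
    for child in root:
        yield ET.tostring(child, encoding="unicode")


def first_message_only(envelope_xml):
    """Mimic a non-disassembler stage: keep the first message, drop the rest."""
    return next(debatch(envelope_xml), None)


# Hypothetical envelope containing two messages.
envelope = "<Orders><Order id='1'/><Order id='2'/></Orders>"
first = first_message_only(envelope)
```

In this sketch the second order is silently discarded, which is the behaviour to keep in mind before executing a disassembler against an envelope message.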

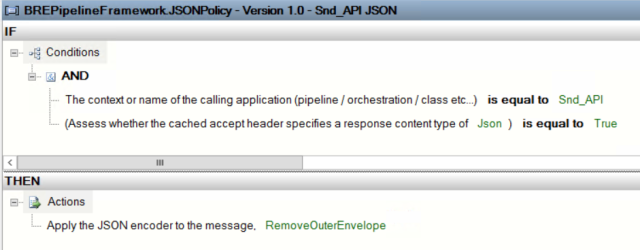

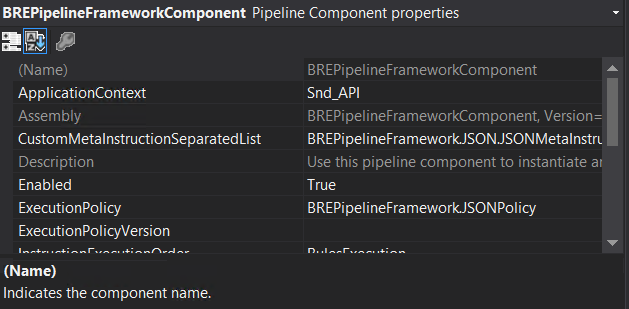

Easier assertion of custom MetaInstructions

In the past, if you wanted to use your own custom MetaInstructions and corresponding vocabularies (see the project documentation page for more info on creating these) you would have to use an Instruction Loader Policy to assert the MetaInstruction fact so that it could be used in your Execution Policy (the BRE Policy in which you assess and manipulate your message’s context and content). In the following screenshot you’ll see how a rule in an Instruction Loader Policy is used to assert a custom MetaInstruction fact into an Execution Policy by specifying the fully qualified class name and fully qualified assembly name of the MetaInstruction class.

With v1.6.0 of the framework there is now a new pipeline component parameter called CustomMetaInstructionSeparatedList, in which you can provide a semicolon-separated list of fully qualified class/assembly names in the below format.

BREPipelineFramework.TestSampleInstructions.MetaInstruction, BREPipelineFramework.TestSampleInstructions, Version=1.0.0.0, Culture=neutral, PublicKeyToken=83eab0b166470ebc;BREPipelineFramework.TestSampleInstructions.MetaInstructionCopy, BREPipelineFramework.TestSampleInstructions, Version=1.0.0.0, Culture=neutral, PublicKeyToken=83eab0b166470ebc

This makes usage of custom MetaInstructions a whole lot easier to manage. You might still want to use an Instruction Loader Policy if any of the below scenarios applies to you.

- You only want to conditionally assert the custom MetaInstruction in certain scenarios

- You want to assert XML or SQL based facts

- You want to conditionally override the ExecutionPolicy or the version
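For readers who want to see how the CustomMetaInstructionSeparatedList format breaks down, here is a minimal Python sketch that splits such a value into class name and assembly name pairs. The function name is hypothetical and this is not how the framework itself parses the parameter; it only assumes that entries are separated by semicolons and that the first comma in each entry separates the class name from the assembly name.

```python
def parse_meta_instruction_list(value):
    """Split a semicolon-separated list into (class name, assembly name) pairs."""
    pairs = []
    for entry in value.split(";"):
        entry = entry.strip()
        if not entry:
            continue  # tolerate a trailing semicolon
        # The first comma separates the class name from the assembly name.
        class_name, _, assembly_name = entry.partition(",")
        pairs.append((class_name.strip(), assembly_name.strip()))
    return pairs


value = (
    "BREPipelineFramework.TestSampleInstructions.MetaInstruction, "
    "BREPipelineFramework.TestSampleInstructions, Version=1.0.0.0, "
    "Culture=neutral, PublicKeyToken=83eab0b166470ebc;"
    "BREPipelineFramework.TestSampleInstructions.MetaInstructionCopy, "
    "BREPipelineFramework.TestSampleInstructions, Version=1.0.0.0, "
    "Culture=neutral, PublicKeyToken=83eab0b166470ebc"
)
pairs = parse_meta_instruction_list(value)
```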

Assorted changes

There are a couple more interesting new features across a variety of vocabularies.

In the BREPipelineFramework.SampleInstructions.CachingInstructions vocabulary, you’ll now find a vocabulary definition called GetCachedValueFromSSOConfigStore. The first time this vocabulary definition is executed it will read in a value from an SSO configuration store and it will also cache it. On further use of the vocabulary definition it will read the cached value rather than the SSO configuration store, keeping the number of hits to the SSO database to a minimum. By default the values will be cached for 30 minutes, but you can override this through the use of the UpdateCacheExpiryTime vocabulary definition in the same vocabulary.
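The read-through caching behaviour described here can be sketched in a few lines of Python. This is an illustration of the general pattern rather than the framework's code: `ExpiringCache`, `read_from_store`, and the key name are all hypothetical, and the store lookup is simulated with a plain function instead of a real SSO call.

```python
import time


class ExpiringCache:
    """Read-through cache whose entries expire after a fixed time."""

    def __init__(self, loader, ttl_seconds=30 * 60, clock=time.monotonic):
        self._loader = loader      # called on a cache miss
        self._ttl = ttl_seconds    # 30 minutes unless overridden
        self._clock = clock        # injectable so expiry can be demonstrated
        self._entries = {}         # key -> (value, expiry time)

    def get(self, key):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[1] > now:
            return entry[0]        # still fresh: no hit on the store
        value = self._loader(key)
        self._entries[key] = (value, now + self._ttl)
        return value


calls = []


def read_from_store(key):
    """Stand-in for the expensive configuration store lookup."""
    calls.append(key)
    return f"value-for-{key}"


fake_now = [0.0]
cache = ExpiringCache(read_from_store, clock=lambda: fake_now[0])

a = cache.get("ConnectionString")  # first use: reads the store and caches
b = cache.get("ConnectionString")  # second use: served from the cache
fake_now[0] = 31 * 60              # move past the 30 minute expiry
c = cache.get("ConnectionString")  # expired: reads the store again
```

Passing a different `ttl_seconds` plays the same role in this sketch as overriding the expiry time does in the vocabulary.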

There is also a new vocabulary definition GetValueFromSSOConfigStore in the BREPipelineFramework.SampleInstructions.HelperInstructions vocabulary which will read in a value from an SSO configuration store without caching the result. This is probably useful in scenarios where you expect that you will be updating the configuration values regularly and always want to be reading in the latest value.

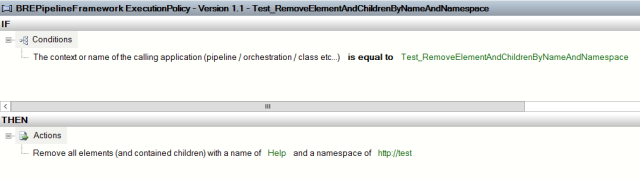

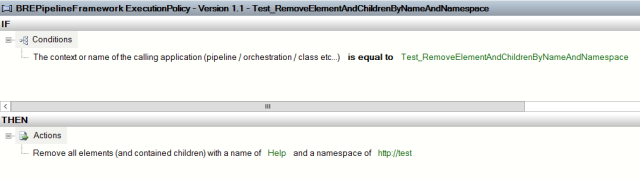

There are two new vocabulary definitions in the BREPipelineFramework.SampleInstructions.XMLTranslatorInstructions vocabulary that allow you to remove an element and all child elements from the XML message being processed, in a fully streaming manner. These are the RemoveElementAndChildrenByName and RemoveElementAndChildrenByNameAndNamespace vocabulary definitions. An example of their usage is below. No exception will be thrown if an element matching the criteria you set is not found in the message, so these vocabulary definitions can be used liberally.
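As a rough illustration of what removing an element and its children in an event-driven way looks like, here is a minimal Python sketch built on the standard library's SAX filter classes. It is not the framework's implementation: the class and function names are hypothetical, it matches on element name only (no namespace handling), and for brevity it takes and returns a string rather than working on a stream.

```python
import io
import xml.sax
from xml.sax.saxutils import XMLFilterBase, XMLGenerator


class RemoveElementFilter(XMLFilterBase):
    """Drop every element with the given name, along with its children."""

    def __init__(self, parent, name):
        super().__init__(parent)
        self._name = name
        self._skip_depth = 0  # greater than 0 while inside a removed element

    def startElement(self, name, attrs):
        if self._skip_depth or name == self._name:
            self._skip_depth += 1
            return
        super().startElement(name, attrs)

    def endElement(self, name):
        if self._skip_depth:
            self._skip_depth -= 1
            return
        super().endElement(name)

    def characters(self, content):
        if not self._skip_depth:
            super().characters(content)


def remove_element_and_children(xml_text, name):
    out = io.StringIO()
    reader = RemoveElementFilter(xml.sax.make_parser(), name)
    reader.setContentHandler(XMLGenerator(out, encoding="utf-8"))
    reader.parse(io.BytesIO(xml_text.encode("utf-8")))
    return out.getvalue()


doc = "<a><secret><x>1</x></secret><c>keep</c></a>"
result = remove_element_and_children(doc, "secret")
unchanged = remove_element_and_children(doc, "missing")
```

When no element matches, the events simply pass through untouched, so the sketch also completes without an error in that case.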

There are also two new vocabulary definitions, ReturnFirstRegexMatchInString and ReturnRegexMatchInStringByIndex, that allow you to run regular expressions against strings. These can be any strings; for example, you could chain vocabulary definitions together to run the regular expression against a context property.
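The two kinds of lookup can be sketched in Python as follows. The function names are hypothetical stand-ins, and two details are assumptions rather than documented behaviour of the vocabulary definitions: the index is treated as zero-based, and an empty string is returned when there is no match.

```python
import re


def first_regex_match(pattern, text):
    """Return the first match of pattern in text, or "" if there is none."""
    match = re.search(pattern, text)
    return match.group(0) if match else ""


def regex_match_by_index(pattern, text, index):
    """Return the match at the given zero-based index, or "" if out of range."""
    matches = [m.group(0) for m in re.finditer(pattern, text)]
    return matches[index] if 0 <= index < len(matches) else ""


text = "Order 123 shipped with invoice 4567"
first = first_regex_match(r"\d+", text)
second = regex_match_by_index(r"\d+", text, 1)
missing = regex_match_by_index(r"\d+", text, 5)
```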

V1.6.0 of the framework also has more tracing all over the place which will make it easier to understand what is going on within your BRE rules.

Optimizations

Finally, there have been some optimizations made to the framework. All reflected types for custom MetaInstructions and maps being executed dynamically will now be cached rather than having their types reflected every single time. This results in improved runtime performance of the framework.
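The general idea behind this optimization is ordinary memoization of a type lookup. The Python sketch below shows the pattern using the standard library; it is an analogy rather than the framework's .NET code, and `resolve_type` is a hypothetical helper that resolves a class from a dotted name.

```python
import importlib
from functools import lru_cache


@lru_cache(maxsize=None)
def resolve_type(qualified_name):
    """Resolve a class from a dotted name, caching the result per name."""
    module_name, _, class_name = qualified_name.rpartition(".")
    module = importlib.import_module(module_name)
    return getattr(module, class_name)


first = resolve_type("collections.OrderedDict")   # resolved and cached
second = resolve_type("collections.OrderedDict")  # served from the cache
```

The second call skips the lookup entirely, which is where the runtime saving comes from when the same type is needed for every message.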